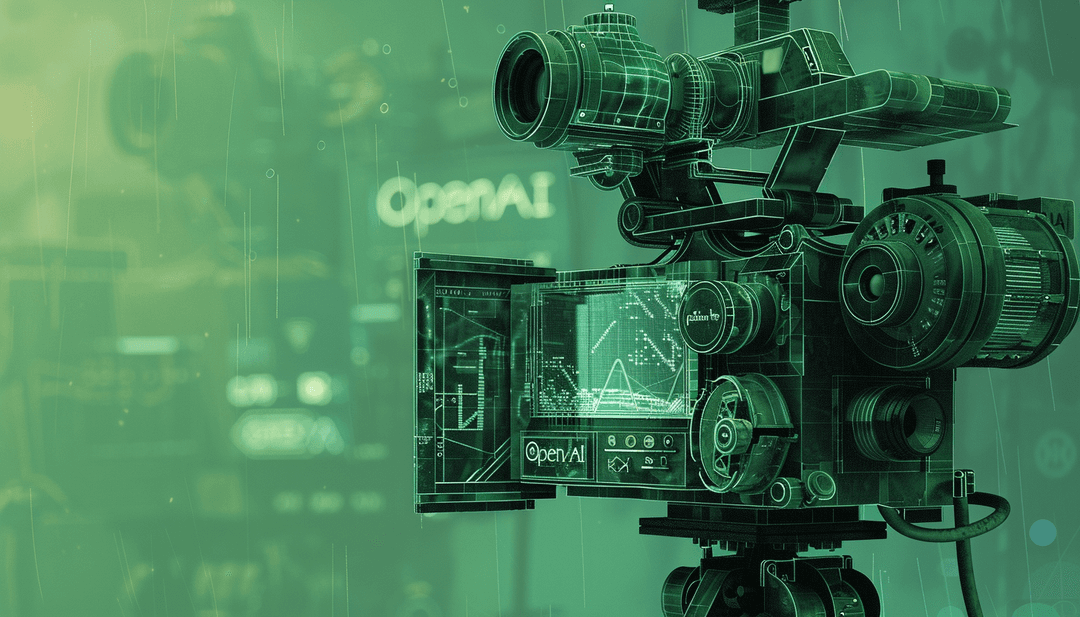

OpenAI Surges Ahead with New Video Generation Model

AI continues to evolve at a rapid pace. OpenAI has just unveiled a technology preview of one of the most anticipated AI advancements of the year.

Meet Sora, a new generative video model developed by OpenAI. Sora can create realistic and imaginative scenes from text prompts. It can produce videos up to a minute long while maintaining visual quality and adherence to the user's prompt. But OpenAI presents Sora as more than just a text-to-video model.

We’re teaching AI to understand and simulate the physical world in motion, with the goal of training models that help people solve problems that require real-world interaction.

What does it look like?

OpenAI has published a selection of sample videos, so let's take a look. The close-up video of a man shows a range of facial expressions, as specified in the prompt. The footage is highly detailed and of exceptional quality. It notably surpasses video generation from competitors such as Runway, Google, and Meta, whose models deliver only shorter, lower-quality videos by comparison.

Sora is able to generate complex scenes with multiple characters, specific types of motion, and accurate details of the subject and background. The model understands not only what the user has asked for in the prompt, but also how those things exist in the physical world.

That is OpenAI's claim, and here is another remarkable Sora sample to support it, with high-definition visuals and intricate detail. On closer examination, however, the moving cars that pass behind trees do not reappear accurately, despite OpenAI's assertion that Sora handles occlusion effectively.

Here is another hand-picked video showcasing the model's remarkable ability to understand the physical world.

Weaknesses?

OpenAI acknowledges that the current model has weaknesses, which suggests it could be some time before it becomes generally available.

The current model has weaknesses. It may struggle with accurately simulating the physics of a complex scene, and may not understand specific instances of cause and effect. For example, a person might take a bite out of a cookie, but afterward, the cookie may not have a bite mark. The model may also confuse spatial details of a prompt, for example, mixing up left and right, and may struggle with precise descriptions of events that take place over time, like following a specific camera trajectory.

The release date?

It might take a while before we learn more. Today's unveiling of Sora is a technology preview, and OpenAI says it has no immediate plans to make the model publicly available. Instead, the company will begin sharing the model with third-party safety testers for the first time today.

Today, Sora is becoming available to red teamers to assess critical areas for harms or risks. We are also granting access to a number of visual artists, designers, and filmmakers to gain feedback on how to advance the model to be most helpful for creative professionals. We’re sharing our research progress early to start working with and getting feedback from people outside of OpenAI and to give the public a sense of what AI capabilities are on the horizon.
